Transformers

nlp

transformers

attention

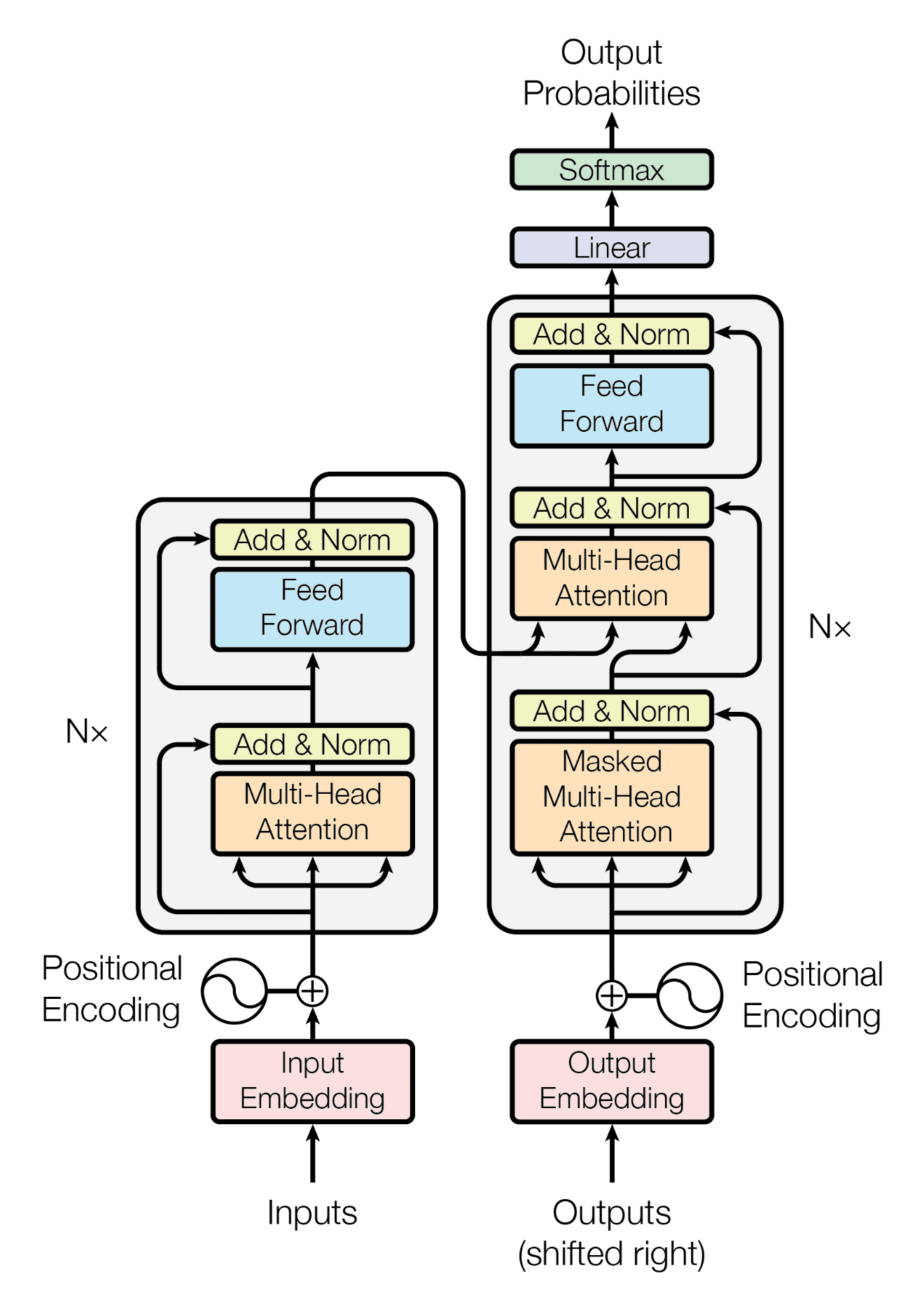

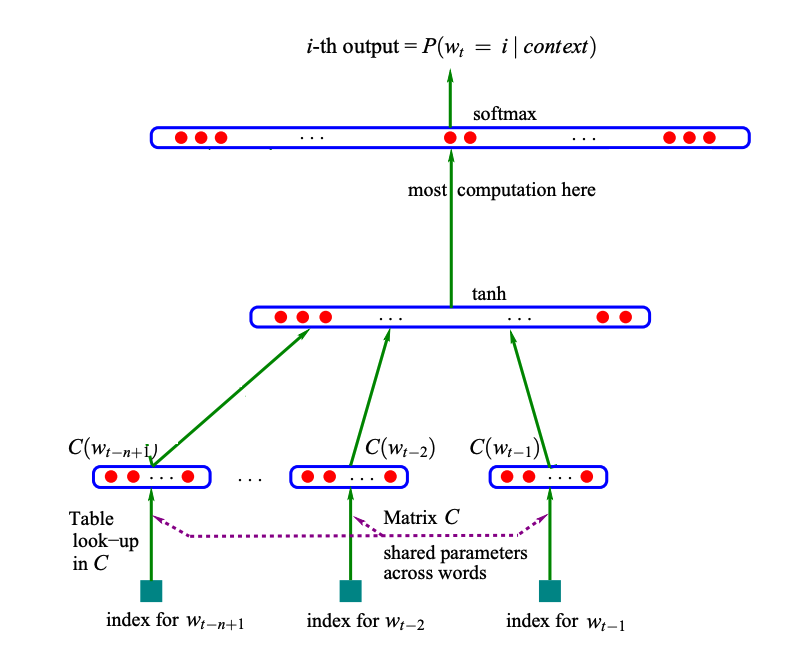

Transformers were introduced in the 2017 paper "Attention Is All You Need" by Vaswani et al., which, as the title suggests, focuses heavily on something called attention. This architecture is still very much in use today: it powers text applications like ChatGPT, and is also used in other fields such as vision, audio and other…

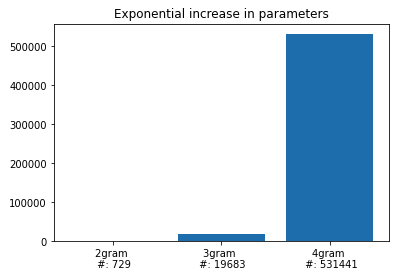

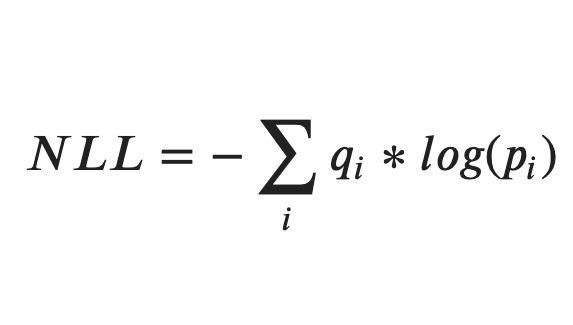

N-gram language models

n-gram

nlp

embeddings

generative

In this post, I’ll discuss a very simple language model: n-grams! To keep things simple we will be…

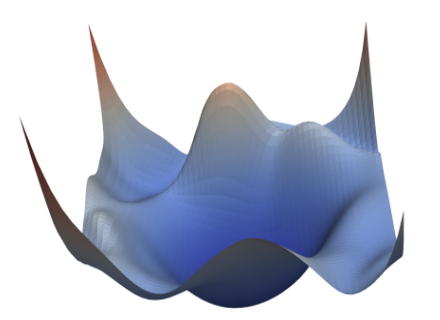

nntrain (4/n): Accelerated optimization

weight decay

momentum

RMSProp

Adam

Resnet

learning rate scheduler

data augmentation

computer vision

In this series, I want to discuss the creation of a small PyTorch-based library for training neural networks: nntrain. It’s based on the excellent part 2 of Practical Deep Learning for Coders by Jeremy Howard, in which from lessons 13 to 18 (roughly)…

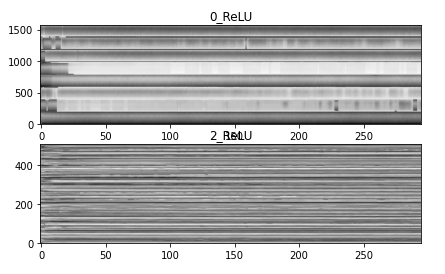

nntrain (3/n): Activations, Initialization and Normalization

convolutions

activations

initialization

batch normalization

computer vision

In this series, I want to discuss the creation of a small PyTorch-based library for training neural networks: nntrain. It’s based on the excellent part 2 of Practical Deep Learning for Coders by Jeremy Howard, in which from lessons 13 to 18 (roughly)…

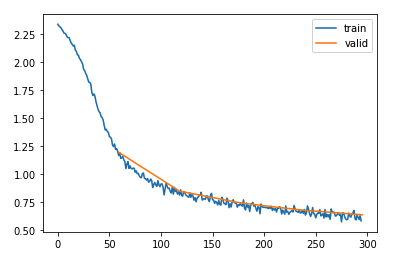

nntrain (2/n): Learner

training

momentum

subscribers

learning rate finder

computer vision

In this series, I want to discuss the creation of a small PyTorch-based library for training neural networks: nntrain. It’s based on the excellent part 2 of Practical Deep Learning for Coders by Jeremy Howard, in which from lessons 13 to 18 (roughly)…

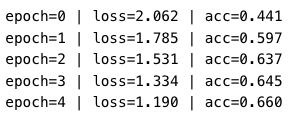

nntrain (1/n): Datasets and Dataloaders

dataloading

training

collation

sampler

In this series, I want to discuss the creation of a small PyTorch-based library for training neural networks: nntrain. It’s based on the excellent part 2 of Practical Deep Learning for Coders by Jeremy Howard, in which from lessons 13 to 18 (roughly)…

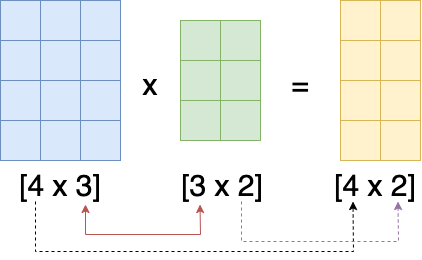

nntrain (0/n): Preliminaries

foundations

PyTorch

nn.Module

In this series, I want to discuss the creation of a small PyTorch-based library for training neural networks: nntrain. It’s based on the excellent part 2 of Practical Deep Learning for Coders by Jeremy Howard, in which from lessons 13 to 18 (roughly)…

Introduction to Stable Diffusion - Code

generative

stable diffusion

diffusers

computer vision

In the previous blog post, the main components and some intuition behind Stable…

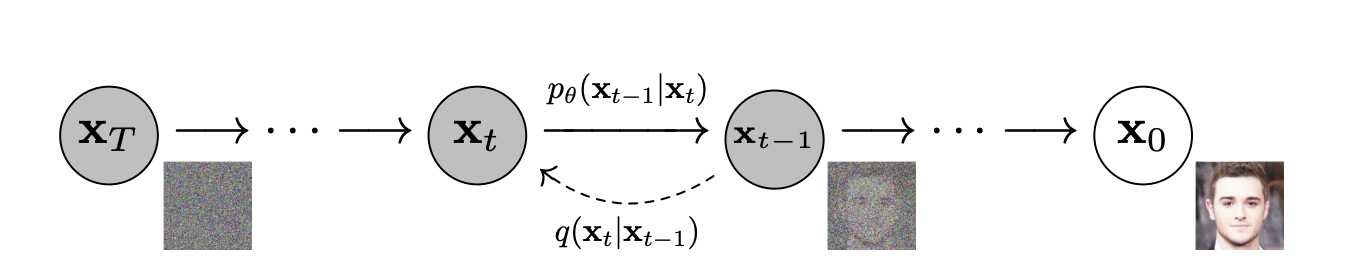

Introduction to Stable Diffusion - Concepts

generative

stable diffusion

diffusers

computer vision

Stable Diffusion, a generative deep learning algorithm developed in 2022, is capable of creating images from prompts. For example, when presented with the prompt: A group of people…
